SKA Dish LMC Deployment

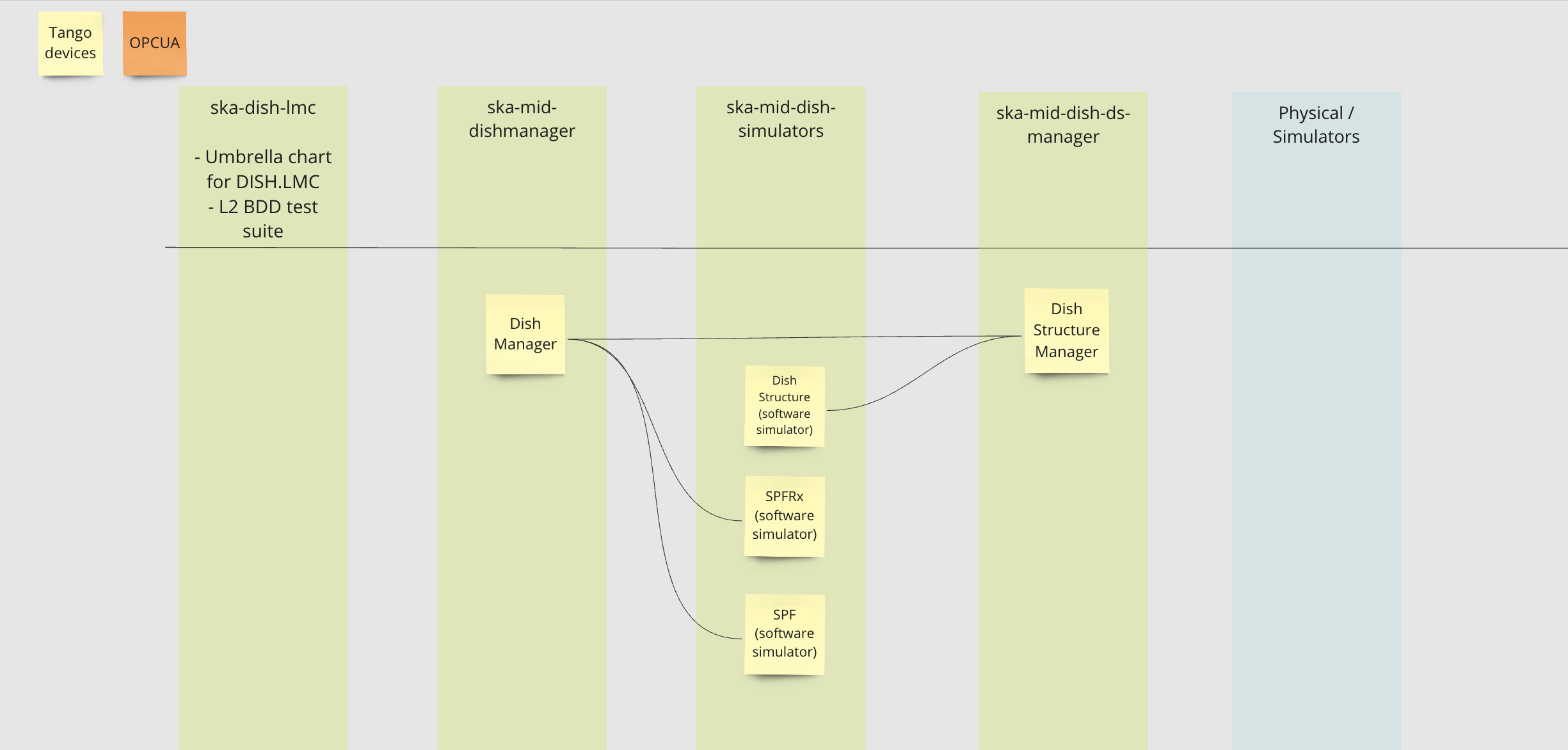

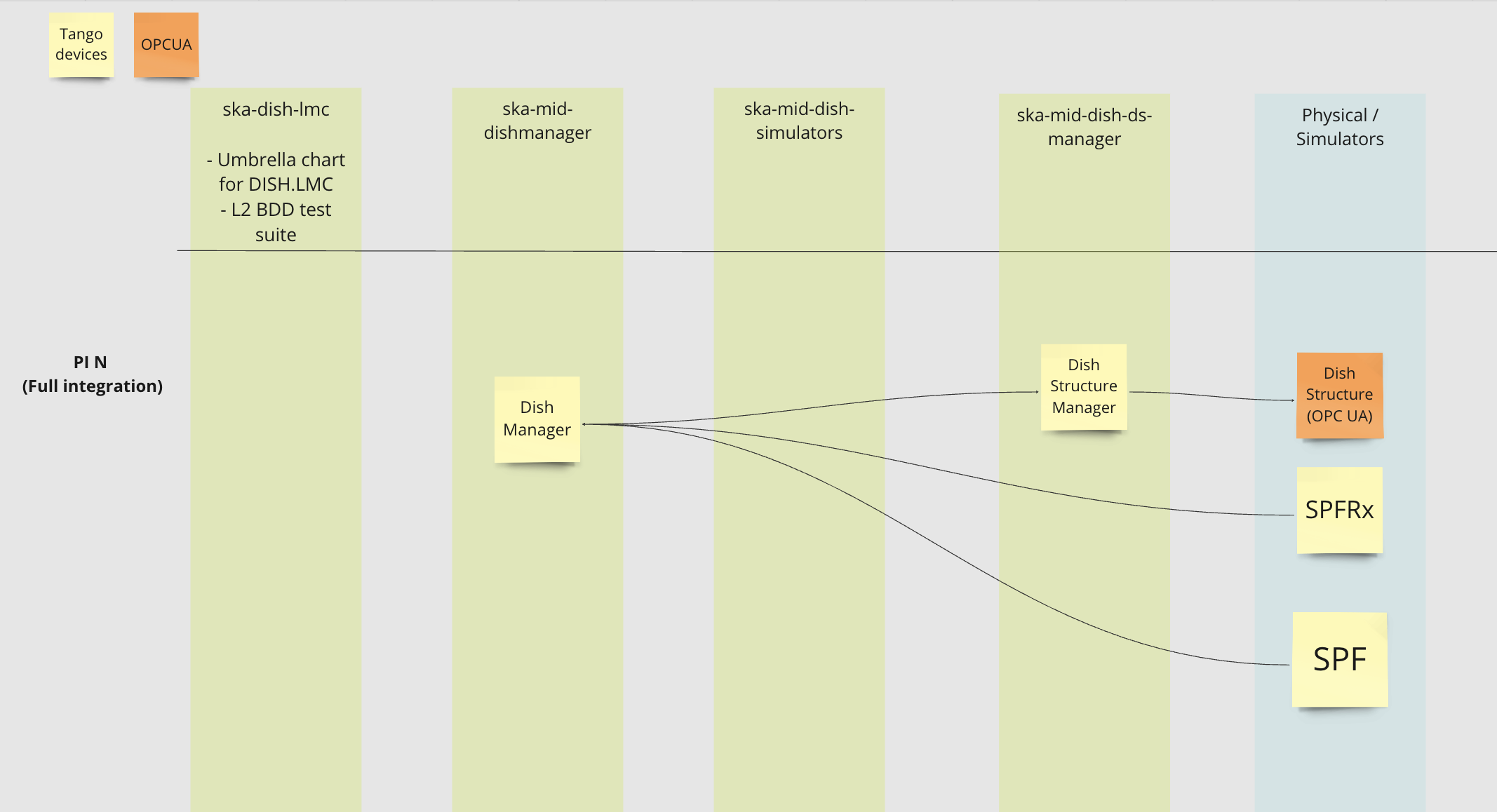

The Dish LMC repository serves as an umbrella environment that deploys the charts of DishManager, DSManager and the simulators. Dish LMC is deployed to connect either to its simulators or to the physical devices through their TANGO interfaces. Depending on the testing environment and the available components, one or more simulators are switched off so that Dish LMC connects to the real interface instead (see below).

Local Deployment

Dish LMC can be deployed using either docker-compose or Kubernetes. For Kubernetes deployments, Dish LMC uses the SKA Tango Operator to manage its device servers as k8s resources.

Deploying Dish LMC using Docker Compose

The option to deploy Dish LMC using Docker Compose was introduced specifically to support interfacing with the MeerKAT Dish Proxy for the DVS integration. Developers without Kubernetes installed can also use it to quickly test their changes.

The following commands deploy and tear down Dish LMC using docker compose:

# create the docker network

docker network create dvs-integration

# Deploy Dish LMC

docker compose -f docker-compose/tango-db.yml -f docker-compose/dish-lmc-devices.yaml up -d

# Teardown Dish LMC

docker compose -f docker-compose/tango-db.yml -f docker-compose/dish-lmc-devices.yaml down

The device servers can be accessed from inside or outside the docker stack. The Tango database host is exposed on port 10000, and a service named cli is deployed alongside the device servers for you to connect to.

# from the shell

$ docker exec -it cli bash

$ itango3

# from itango

In [1]: dp = DeviceProxy("mid-dish/dish-manager/SKA001")

# test comms to tango host

$ telnet localhost 10000

# start itango

$ TANGO_HOST=localhost:10000 itango3

In [1]: dp = DeviceProxy("mid-dish/dish-manager/SKA001")

# you can also access the server from your script

dp = DeviceProxy("tango://localhost:10000/mid-dish/dish-manager/SKA001")
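The telnet check and the full device address shown above can also be reproduced from a script. The sketch below uses only the Python standard library, so it runs without PyTango installed; the helper names `tango_host_reachable` and `device_trl` are illustrative and are not part of Dish LMC or PyTango.

```python
import socket


def tango_host_reachable(host="localhost", port=10000, timeout=2.0):
    """Return True if a TCP connection to the Tango database port succeeds.

    This mirrors the `telnet localhost 10000` check: it only proves that
    something is listening, not that the Tango database is healthy.
    """
    try:
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:
        return False


def device_trl(device_name, host="localhost", port=10000):
    """Build the full address form that is passed to DeviceProxy from a script."""
    return f"tango://{host}:{port}/{device_name}"


if __name__ == "__main__":
    trl = device_trl("mid-dish/dish-manager/SKA001")
    print(trl, "reachable:", tango_host_reachable())
```

If the port is reachable, the returned string can be passed to `DeviceProxy` exactly as in the last line of the listing above.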

Note

Before deploying, ensure the network mode is overridden to match the docker network you created.

Deploy Dish LMC from its repository

$ cd charts/ska-dish-lmc

$ helm upgrade --install dev . -n ska-dish-lmc -f ./custom_helm_flags.yaml

Note

ska-tango-base is deployed by default in this case. To disable it, add --set ska-tango-base.enabled=false to the helm command.

Deploy Dish LMC from another repository

The ska-dish-lmc chart can be deployed from your own repository to include it as part of your own deployment process. This will also require setting some additional configuration in your values file.

# in dish_lmc_values.yaml
...
ska-mid-dish-manager:
  enabled: true
  dishmanager:
    wms:
      monitoring: true
      station_ids: ["1"]
    b5dcproxy:
      monitoring: true

ska-mid-dish-simulators:
  enabled: true
  deviceServers:
    spfdevice:
      enabled: true
    spfrxdevice:
      enabled: true
  dsOpcuaSimulator:
    enabled: true

# Deploy an instance of the weather station tango
# device and a simulator server for it to connect to
ska-mid-wms:
  enabled: true
  ska-tango-base:
    enabled: false
    itango:
      enabled: false
  deviceServers:
    wms:
      enabled: true
      station_ids: ["1"]
      modbus_server_hostnames: ["wms-sim-1"]
      modbus_server_ports: ["1502"]
  simulator:
    enabled: True

Pass extra variables to your make target to set the parameters for deployment.

make k8s-install-chart K8S_CHART_PARAMS='-f charts/ska-dish-lmc/values.yaml --set "global.dishes={001,111}"' \
  HELM_RELEASE=x.x.x K8S_CHART=ska-dish-lmc
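When the list of dishes changes between deployments, the quoting of the `global.dishes` value is easy to get wrong. The following sketch assembles the same make invocation from a list of dish IDs. The function names are hypothetical, and the values file path and the `x.x.x` release placeholder are copied from the command above rather than taken from any real release.

```python
import shlex


def dishes_flag(dish_ids):
    """Render dish IDs as the Helm list literal, e.g. global.dishes={001,111}."""
    if not dish_ids:
        raise ValueError("at least one dish ID is required")
    return "global.dishes={" + ",".join(dish_ids) + "}"


def make_command(dish_ids,
                 values_file="charts/ska-dish-lmc/values.yaml",
                 release="x.x.x"):
    """Return the make invocation as an argument list (no shell quoting needed)."""
    chart_params = f'-f {values_file} --set "{dishes_flag(dish_ids)}"'
    return [
        "make",
        "k8s-install-chart",
        f"K8S_CHART_PARAMS={chart_params}",
        f"HELM_RELEASE={release}",
        "K8S_CHART=ska-dish-lmc",
    ]


if __name__ == "__main__":
    # shlex.join adds the shell quoting needed to paste the command into a terminal.
    print(shlex.join(make_command(["001", "111"])))
```

Returning an argument list keeps the inner double quotes intact when the command is handed to `subprocess.run`, and `shlex.join` is only needed for display.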

Note

In this case, ska-tango-base is not deployed by default. To deploy it, add --set ska-tango-base.enabled=true.

The table below explains the Dish LMC deployment Helm flags:

| Helm flag | Description | Example Values |
|---|---|---|
| global.dishes | Determine the number of DISH.LMC instances. The values match the DISH IDs | {001}, {001,111} |
| global.minikube | Set to | true, false |
| global.operator | Set to | true, false |
| ska-mid-dish-manager.dishmanager.<device>.fqdn | Address to reach the device (ds manager, spf, spfrx) | tango://127.0.0.1:45678/foo/bar/1#dbase=no |
| ska-mid-dish-manager.dishmanager.<device>.family_name | The middle value in the tango triplet. Note: The device name has to conform to ADR-9 e.g. mid-dish/simulator-spfc/SKA001 | simulator-spfc, simulator-spfrx, spfc, spfrxpu-controller |
| ska-mid-dish-manager.dishmanager.wms.monitoring | Enables/Disables monitoring of the weather station instances deployed | true, false |
| ska-mid-dish-manager.dishmanager.station_ids | List wms tango devices instances dish manager should connect to | ["1", "2", "3"] |
| ska-mid-dish-manager.dishmanager.b5dcproxy.monitoring | Enables/Disables monitoring of the B5DC proxy | true, false |
| ska-mid-dish-simulators.enabled | Enable or disable the device simulators chart in dish-simulators repository | true, false |
| ska-mid-dish-simulators.deviceServers.spfdevice.enabled | Enable or disable the SPF device simulator | true, false |
| ska-mid-dish-simulators.deviceServers.spfrxdevice.enabled | Enable or disable the SPFRx device simulator | true, false |
| ska-mid-dish-simulators.dsOpcuaSimulator.enabled | Enable or disable the OPCUA server | true, false |
| ska-mid-dish-manager.ska-mid-dish-ds-manager.dishstructuremanager.dsc.fqdns | List containing a Dish Structure Controller device FQDN for each instance of DISH.LMC to connect to. Note: The number of FQDNs specified must match the number of Dish IDs present in global.dishes | {opc.tcp://127.0.0.1:1234/OPCUA/SimulationServer, opc.tcp://127.0.0.1:5678/OPCUA/SimulationServer} |
| ska-tango-base.enabled | Enable or disable the ska-tango-base dependency | true, false |
| ska-mid-wms.enabled | Enable or disable the Weather Monitoring System (WMS) chart | true, false |
| ska-mid-wms.deviceServers.wms.station_ids | List of tango device server instances to deploy (also corresponds to simulator IDs) | ["14", "2", ...] |
| ska-mid-wms.deviceServers.wms.modbus_server_hostnames | hostname/ip address of the running modbus servers (default sim hostnames are wms-sim-<station-id>) | ["1.1.1.1", "2.2.2.2", ..., "wms-sim-1", "wms-sim-2", ...] |
| ska-mid-wms.deviceServers.wms.modbus_server_ports | port numbers of the running modbus servers (default sim port is 1502) | ["8080", "1502", ...] |
| ska-mid-dish-b5dc-proxy.enabled | Enable or disable the Band 5 downconverter proxy device | true, false |
| ska-mid-dish-dcp-lib.b5dcSimulator.enabled | Enable or disable the Band 5 downconverter simulator | true, false |
| ska-mid-dish-b5dc-proxy.b5dcproxy.fqdn | ip:port of the deployed down converter / simulator | [127.0.0.1:1234] |
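Two details from the table can be checked before running helm: the number of DSC FQDNs must match the number of dish IDs, and the WMS simulator defaults follow a fixed pattern (hostname wms-sim-<station-id>, port 1502). The sketch below illustrates both; the function names are hypothetical and nothing here is part of the ska-dish-lmc chart itself.

```python
def check_dsc_fqdns(dish_ids, dsc_fqdns):
    """Raise ValueError unless there is exactly one DSC FQDN per dish ID."""
    if len(dish_ids) != len(dsc_fqdns):
        raise ValueError(
            f"{len(dish_ids)} dish ID(s) but {len(dsc_fqdns)} DSC FQDN(s)"
        )


def default_wms_sim_values(station_ids):
    """Build the wms values for simulators using the documented defaults."""
    ids = [str(station_id) for station_id in station_ids]
    return {
        "station_ids": ids,
        # Default simulator hostname pattern: wms-sim-<station-id>
        "modbus_server_hostnames": [f"wms-sim-{station_id}" for station_id in ids],
        # Default simulator port: 1502 (kept as strings, as in the examples)
        "modbus_server_ports": ["1502"] * len(ids),
    }


if __name__ == "__main__":
    check_dsc_fqdns(
        ["001", "111"],
        [
            "opc.tcp://127.0.0.1:1234/OPCUA/SimulationServer",
            "opc.tcp://127.0.0.1:5678/OPCUA/SimulationServer",
        ],
    )
    print(default_wms_sim_values(["1", "2"]))
```

The dictionary returned by `default_wms_sim_values` corresponds to the three `ska-mid-wms.deviceServers.wms.*` flags, so it can be dumped into a values file or translated into `--set` arguments.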

Cluster Deployments

Dish LMC in stfc, mid-psi, mid-itf

The chart can be deployed to three clusters: stfc-techops, mid-psi and mid-itf.

The pipeline has six stages, each representing a different deployment recipe of the chart to one of these clusters:

| Pipeline Stage | Devices Deployed | Cluster |
|---|---|---|
| deploy-to-stfc-techops | DishManager, DSManager, Dish Structure Simulator, SPFRx Simulator, SPF Simulator | stfc |
| deploy-to-mid-psi | DishManager, DSManager, SPFRx hardware (RxPU Controller), Dish Structure Simulator, SPF Simulator | mid-psi |
| deploy-lmc-to-itf-karoo-sims | DishManager, DSManager, SPFRx Simulator, Dish Structure Simulator, SPF Simulator | mid-itf |
| deploy-lmc-to-itf-spf | DishManager, DSManager, SPF hardware (SPF Controller), Dish Structure Simulator, SPFRx Simulator | mid-itf |
| deploy-lmc-to-itf-spfrx | DishManager, DSManager, SPFRx hardware (RxPU Controller), Dish Structure Simulator, SPF Simulator | mid-itf |
| deploy-lmc-to-itf-ds | DishManager, DSManager, CETC DS Simulator, SPFRx Simulator, SPF Simulator | mid-itf |

Note

The last 4 stages will deploy into the same cluster (mid-itf) but in four different k8s namespaces. See project README for the namespaces.

Each stage has 12 jobs, with an additional job in the deploy-lmc-to-itf-spfrx stage to register the SPFRx device in Dish LMC's tango database. The table below describes each job:

| Job | Description |
|---|---|
| create-namespace-stfc-techops | Creates the namespace used by the rest of the jobs |
| deploy-ska-tango-base-stfc-techops | Deploys the ska-tango-base chart |
| deploy-ska-dish-lmc-stfc-techops | Deploys the Dish LMC devices |
| deploy-taranta-stfc-techops | Deploys Taranta charts (taranta and tangogql) |
| gather-deployment-data-stfc-techops | Gathers info about pods and containers running in the namespace |
| smoke-test-stfc-techops | Verify that LMC devices and the tango db are available |
| integration-test-stfc-techops | Run the set of integration tests |
| output-logs-stfc-techops | Acquire & output dish logger logs from the Dish LMC deployment in MID PSI cluster |
| delete-namespace-stfc-techops | Deletes the namespace (the entire gitlab environment) |
| delete-ska-dish-lmc-stfc-techops | Deletes the Dish LMC devices deployment |
| delete-taranta-stfc-techops | Delete the Taranta charts |
| delete-ska-tango-base-stfc-techops | Delete the ska-tango-base chart |

Note

- The jobs are triggered manually and should be run in a specific order.
- The job names in the various stages differ only in the suffix (i.e. stfc-techops vs mid-psi).
- The available environments are shown as GitLab environments.
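Because the job names differ only in their suffix, the names for another stage can be derived from the stfc-techops names in the job table. The sketch below does this for the mid-psi suffix mentioned in the note. The list follows the order of the job table, which is not necessarily the order the jobs must be run in, and the suffixes used by the Mid ITF stages are not stated here, so any other suffix passed in is an assumption.

```python
# Job name prefixes, in the same order as the job table.
# This is the table order, not a statement about the required run order.
JOB_PREFIXES = [
    "create-namespace",
    "deploy-ska-tango-base",
    "deploy-ska-dish-lmc",
    "deploy-taranta",
    "gather-deployment-data",
    "smoke-test",
    "integration-test",
    "output-logs",
    "delete-namespace",
    "delete-ska-dish-lmc",
    "delete-taranta",
    "delete-ska-tango-base",
]


def job_names(cluster_suffix):
    """Return the 12 job names for a stage, given its cluster suffix."""
    return [f"{prefix}-{cluster_suffix}" for prefix in JOB_PREFIXES]


if __name__ == "__main__":
    for name in job_names("mid-psi"):
        print(name)
```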

The deployment definitions are in the folder gitlab-ci/includes, which holds the values file applied for each cluster and each environment. For example, the values file applied to mid-psi is at gitlab-ci/includes/psi-mid/mid-psi-ska-dish-lmc/values.yaml.

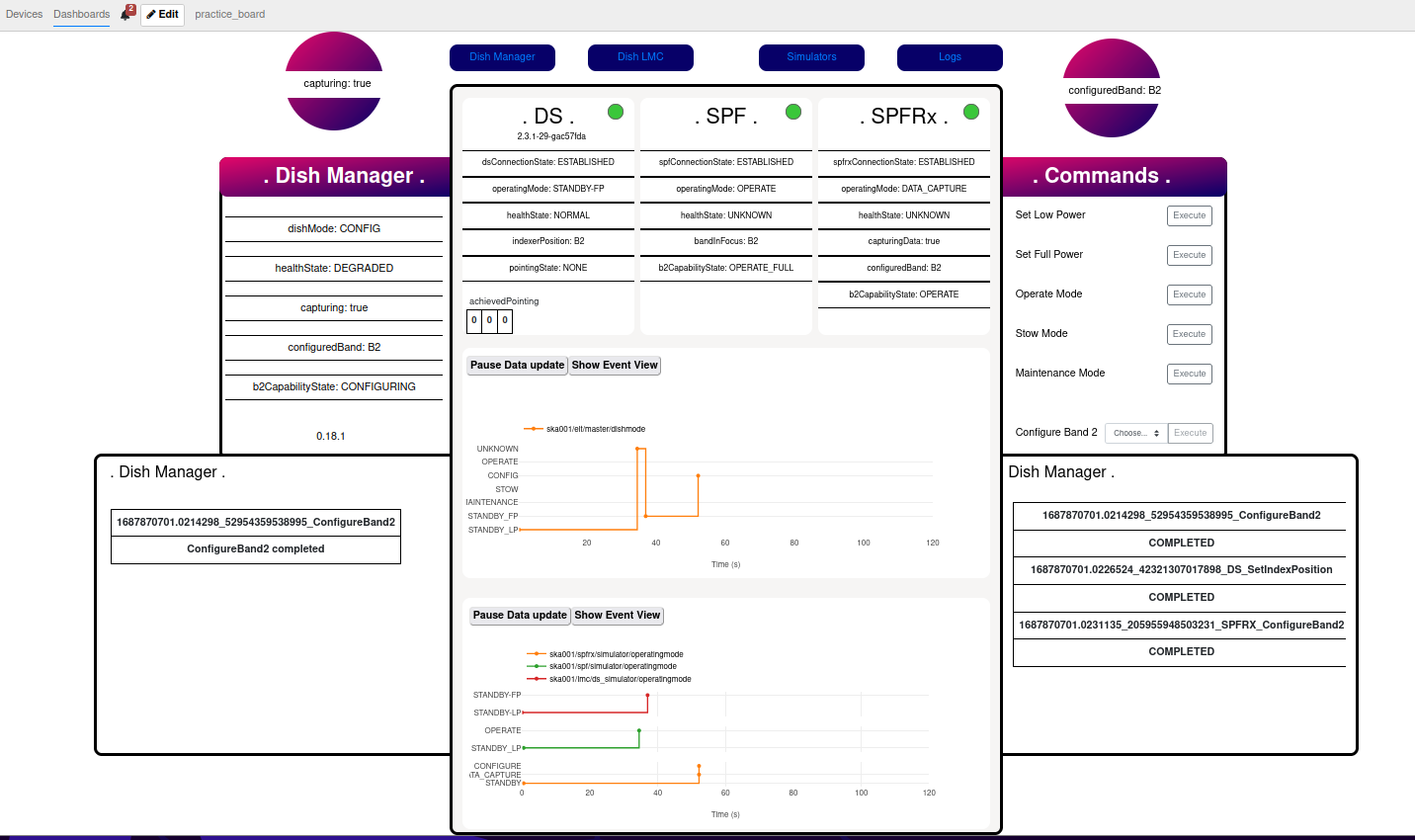

Taranta Dashboards

A monitoring and control Taranta dashboard for Dish LMC can be deployed in each of the Mid ITF deployments using the deploy-taranta-<cluster-name> manual job. It is then accessible at the url below, after substituting the chosen deployment namespace.

url: https://k8s.miditf.internal.skao.int/<INSERT_NAMESPACE>/taranta/devices/
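The namespace substitution can be sketched as a small helper. The function name is hypothetical, and the example namespace is borrowed from the archiver URLs in the EDA Configuration section; check the project README for the namespaces that actually exist.

```python
TARANTA_URL_TEMPLATE = "https://k8s.miditf.internal.skao.int/{namespace}/taranta/devices/"


def taranta_devices_url(namespace):
    """Return the Taranta devices URL for a Mid ITF deployment namespace."""
    if not namespace or "/" in namespace:
        raise ValueError(f"invalid namespace: {namespace!r}")
    return TARANTA_URL_TEMPLATE.format(namespace=namespace)


if __name__ == "__main__":
    # Example namespace only; substitute the namespace of your deployment.
    print(taranta_devices_url("miditf-lmc-003-karoo-sims"))
```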

Additionally, engineering dashboards are available to be uploaded in the Taranta widget to control and monitor the Dish Structure, SPF and SPFRx (see screenshots of all the dashboards here). The dashboards include variables that allow you to change the device name and can be shared across namespaces. You can find documentation on how to use and update these variables at this link.

EDA Configuration

It is possible to deploy the Engineering Data Archive (EDA) tango devices alongside a Dish LMC deployment and configure the event subscriber to archive attributes to a running timescaleDB instance. To generate the required EDA configuration for a device, refer to this GitLab snippet.

As an alternative to using a timescaleDB instance running in the ITF, an instance can be deployed locally by following the ska-tango-archiver guides.

The manual jobs to deploy and configure the EDA in the ITF can be found in the deploy-lmc-to-itf-karoo-sims stage.

The timescaleDB parameters used in the deployment job are set as environment variables on the ska-dish-lmc pipeline, while the Dish LMC configuration file is defined at eda-config/dish-lmc.yml.

Once deployed, and while on the ITF VPN, the archive configurator can be accessed at http://configurator.miditf-lmc-003-karoo-sims.svc.miditf.internal.skao.int:8003/ and the archive viewer at http://archviewer.miditf-lmc-003-karoo-sims.svc.miditf.internal.skao.int:8082/.