SKA Telescope Dish LMC

Dish LMC Software Overview

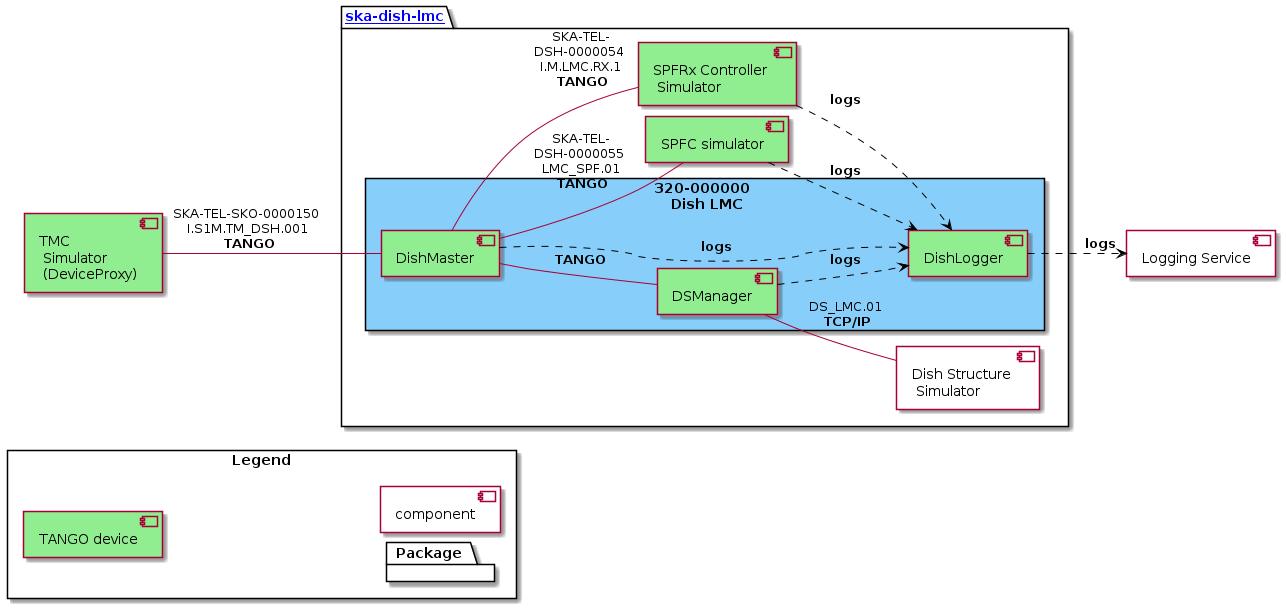

Dish LMC is the monitoring and control (M&C) system for the SKA MID dish of the Square Kilometre Array (SKA).

It is built with Python and the Tango Controls toolkit. The system consists of three main components:

- Dish Manager (formerly known as DishMaster)
- Dish Structure (DS) Manager
- Dish Logger

To aid testing, the project also provides its own simulators: the SPF Controller Simulator and the SPFRx Controller Simulator.

Dish LMC Device context

The main entrypoint for TMC to control and monitor a dish is the DishManager device.

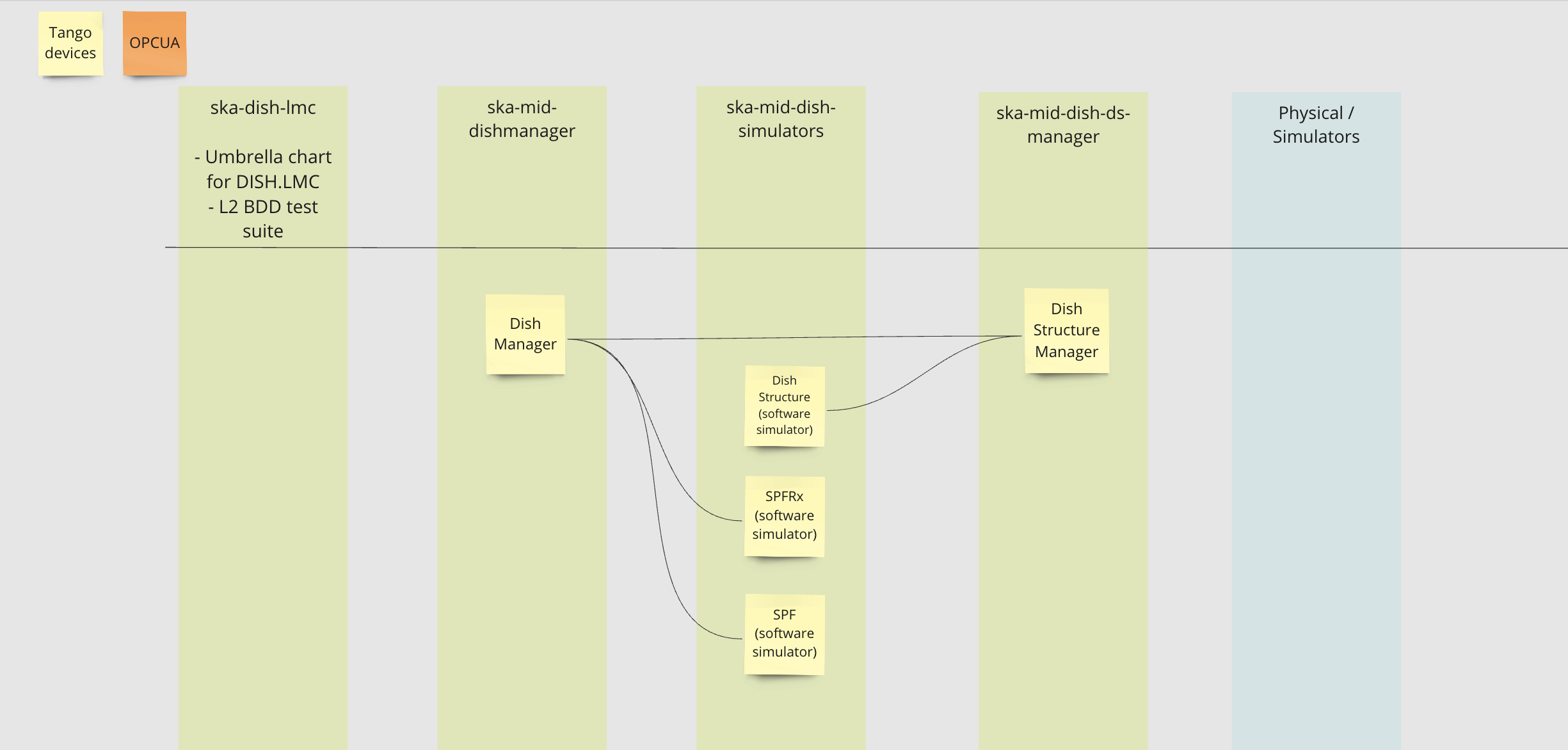

Repository layouts

Devices for full deployment

Devices for CI/CD

Additional deployments

Additional deployments can be found here: Deployments

Deployment

$ helm upgrade --install dev . -n ska-dish-lmc \
    --set global.exposeDatabaseDS=true \
    --set global.dishes="{001}" \
    --set global.minikube=true \
    --set global.operator=true \
    --set ska-mid-dish-simulators.enabled=true \
    --set ska-mid-dish-simulators.deviceServers.spfdevice.enabled=true \
    --set ska-mid-dish-simulators.deviceServers.spfrxdevice.enabled=true \
    --set ska-mid-dish-simulators.dsOpcuaSimulator.enabled=true

ska-tango-base is not deployed by default. To deploy it, add the --set flag below:

--set ska-tango-base.enabled=true

--set flag options

| Option | Description | Example values |
|---|---|---|
| `global.dishes` | Determine the number of sets of DISH.LMC devices. | {001}, {001, 002} |
| `global.minikube` | Set to true when deploying to minikube | true, false |
| `global.operator` | Set to true to use ska tango operator for k8s deployment | true, false |
| `ska-mid-dish-manager.dishmanager.<device>.fqdn` | Full path | tango://127.0.0.1:45678/foo/bar/1#dbase=no |
| `ska-mid-dish-manager.dishmanager.<device>.family_name` | The middle value in the tango triplet | spf, spfrx |
| `ska-mid-dish-manager.dishmanager.<device>.member_name` | The last value in the tango triplet | simulator, controller |
| `ska-mid-dish-simulators.enabled` | Enable or disable the device simulators chart in dish-simulators repository | true, false |
| `ska-mid-dish-simulators.deviceServers.spfdevice.enabled` | Enable or disable the SPF device simulator | true, false |
| `ska-mid-dish-simulators.deviceServers.spfrxdevice.enabled` | Enable or disable the SPFRx device simulator | true, false |
| `ska-mid-dish-ds-manager.ska-mid-dish-simulators.enabled` | Enable or disable simulators chart in ds-manager repository | true, false |
| `ska-mid-dish-ds-manager.ska-mid-dish-simulators.dsOpcuaSimulator.enabled` | Enable or disable the OPCUA server | true, false |
| `ska-tango-base.enabled` | Enable or disable the ska-tango-base dependency | true, false |
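When a deployment is scripted rather than typed by hand, the `--set` options in the table can be assembled programmatically. The following is a minimal sketch under stated assumptions: the helper names `format_dishes` and `helm_set_args` are hypothetical and not part of this repository, and the code only builds the argument list for the `helm upgrade --install` command from the Deployment section.

```python
def format_dishes(dish_ids):
    """Render dish IDs in Helm's list syntax, e.g. ["001", "002"] -> "{001,002}"."""
    return "{" + ",".join(dish_ids) + "}"


def helm_set_args(options):
    """Turn a mapping of chart options into a flat list of --set arguments."""
    args = []
    for key, value in options.items():
        # Write booleans in lowercase, as in the example values of the table.
        if isinstance(value, bool):
            value = "true" if value else "false"
        args += ["--set", f"{key}={value}"]
    return args


# Option names are taken from the table; the values are illustrative.
options = {
    "global.dishes": format_dishes(["001"]),
    "global.minikube": True,
    "global.operator": True,
    "ska-mid-dish-simulators.enabled": True,
}
command = ["helm", "upgrade", "--install", "dev", ".", "-n", "ska-dish-lmc"]
command += helm_set_args(options)
print(" ".join(command))
```

Passing `command` as a list to `subprocess.run` (without `shell=True`) keeps a value such as `{001,002}` from being brace-expanded by a shell.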

Deployments

This repository can deploy the chart to different clusters. Specifically, it can deploy to the stfc-techops, psi-mid, and itf-mid clusters through 6 stages: ‘deploy-to-mid-psi’, ‘deploy-to-stfc-techops’, ‘deploy-lmc-to-itf-spf’, ‘deploy-lmc-to-itf-karoo-sims’, ‘deploy-lmc-to-itf-spfrx’ and ‘deploy-lmc-to-itf-ds’. The last 4 stages deploy into the same cluster (itf-mid) but into 4 different k8s namespaces.

For each stage, 12 jobs are available to create the ska-dish-lmc environment. The ‘deploy-lmc-to-itf-spfrx’ stage has an additional job that registers the physical SPFRx device in the ITF with the Tango database. For example, the stfc-techops stage contains:

| Job | Description |
|---|---|
| create-namespace-stfc-techops | Creates the namespace used by the rest of the jobs |
| deploy-ska-tango-base-stfc-techops | Deploys the ska-tango-base chart |
| deploy-ska-dish-lmc-stfc-techops | Deploys the Dish LMC devices |
| deploy-taranta-stfc-techops | Deploys Taranta charts (taranta and tangogql) |
| gather-deployment-data-stfc-techops | Gathers info about pods and containers running in the namespace |
| smoke-test-stfc-techops | Verify that LMC devices and the tango db are available |
| integration-test-stfc-techops | Run the set of integration tests |
| output-logs-stfc-techops | Acquire & output dish logger logs from the Dish LMC deployment in MID PSI cluster |
| delete-namespace-stfc-techops | Deletes the namespace (the entire environment) |
| delete-ska-dish-lmc-stfc-techops | Deletes the Dish LMC devices deployment |
| delete-taranta-stfc-techops | Delete the Taranta charts |
| delete-ska-tango-base-stfc-techops | Delete the ska-tango-base chart |

Please note that:

- The jobs in the various stages differ only in their suffix (e.g. stfc-techops vs mid-psi).
- The available environments are shown as GitLab environments.
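Because the job names differ only in their suffix, the names for another stage can be derived from the stfc-techops table. The sketch below assumes exactly that naming rule; the `jobs_for` helper is hypothetical, and the resulting names are inferred rather than checked against the pipeline definition.

```python
# Job name prefixes, taken from the stfc-techops table above.
JOB_PREFIXES = [
    "create-namespace",
    "deploy-ska-tango-base",
    "deploy-ska-dish-lmc",
    "deploy-taranta",
    "gather-deployment-data",
    "smoke-test",
    "integration-test",
    "output-logs",
    "delete-namespace",
    "delete-ska-dish-lmc",
    "delete-taranta",
    "delete-ska-tango-base",
]


def jobs_for(suffix):
    """Return the expected job names for a stage suffix such as "mid-psi"."""
    return [f"{prefix}-{suffix}" for prefix in JOB_PREFIXES]


for job in jobs_for("mid-psi"):
    print(job)
```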

The deployment definitions are in the folder gitlab-ci/includes, which holds the values file applied for each cluster and environment. For example, the values file applied to psi-mid is gitlab-ci/includes/psi-mid/mid-psi-ska-dish-lmc/values.yaml.

PSI MID specific

The Dish LMC deployment excludes the SPFRx Simulator.

ITF specific

There are 4 different deployments targeting the ITF, shown in the table below:

| Stage | Deployment details | Cluster namespace | Taranta link |
|---|---|---|---|
| deploy-lmc-to-itf-ds | DishManager + Dish Structure Simulator (CETC54) | miditf-lmc-002-ds | https://k8s.miditf.internal.skao.int/miditf-lmc-002-ds/taranta/devices |
| deploy-lmc-to-itf-karoo-sims | DishManager + Karoo software simulators | miditf-lmc-003-karoo-sims | https://k8s.miditf.internal.skao.int/miditf-lmc-003-karoo-sims/taranta/devices |
| deploy-lmc-to-itf-spf | DishManager + Physical SPF + Karoo software simulators | miditf-lmc-004-spf | https://k8s.miditf.internal.skao.int/miditf-lmc-004-spf/taranta/devices |
| deploy-lmc-to-itf-spfrx | DishManager + Physical SPFRx + Karoo software simulators | miditf-lmc-005-spfrx | https://k8s.miditf.internal.skao.int/miditf-lmc-005-spfrx/taranta/devices |

Binderhub

Binderhub is available to all deployments in the Mid ITF. First connect to the Mid ITF VPN; Binderhub is then accessible at https://k8s.miditf.internal.skao.int/binderhub/.

A JupyTango notebook can be created by building and launching the JupyTango gitlab repository (https://gitlab.com/tango-controls/jupyTango). The following details can then be used to access the tango database.

TANGO_HOST={DATABASEDS_NAME}.{KUBE_NAMESPACE}.svc.{CLUSTER_DOMAIN}:10000
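Inside a notebook, the `TANGO_HOST` value can be composed from the three variables in the template above. This is a minimal sketch: the `tango_host` helper is hypothetical, and the database device server name and cluster domain used below are placeholders rather than confirmed values for the Mid ITF. Only the namespace is taken from the ITF table.

```python
import os


def tango_host(databaseds_name, kube_namespace, cluster_domain, port=10000):
    """Compose TANGO_HOST following the template shown above."""
    return f"{databaseds_name}.{kube_namespace}.svc.{cluster_domain}:{port}"


# Placeholder values: substitute the real DATABASEDS_NAME and CLUSTER_DOMAIN.
host = tango_host(
    databaseds_name="tango-databaseds",
    kube_namespace="miditf-lmc-002-ds",
    cluster_domain="cluster.example",
)

# Export the variable before the Tango client library is used.
os.environ["TANGO_HOST"] = host
print(host)
```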

For more information on Mid ITF environment variables see this page.

Taranta

Taranta is deployed in each of the Mid ITF deployments and is accessible through the following URL after substituting the chosen deployment namespace:

https://k8s.miditf.internal.skao.int/{KUBE_NAMESPACE}/taranta/devices
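The Taranta links listed in the ITF table all follow one pattern, so the URL for a given stage can be derived from its namespace. The sketch below copies the stage-to-namespace mapping from that table; the `taranta_url` helper is hypothetical.

```python
# Stage -> namespace, as listed in the ITF deployments table.
ITF_NAMESPACES = {
    "deploy-lmc-to-itf-ds": "miditf-lmc-002-ds",
    "deploy-lmc-to-itf-karoo-sims": "miditf-lmc-003-karoo-sims",
    "deploy-lmc-to-itf-spf": "miditf-lmc-004-spf",
    "deploy-lmc-to-itf-spfrx": "miditf-lmc-005-spfrx",
}

TARANTA_URL_TEMPLATE = "https://k8s.miditf.internal.skao.int/{namespace}/taranta/devices"


def taranta_url(stage):
    """Return the Taranta devices URL for one of the ITF deployment stages."""
    namespace = ITF_NAMESPACES[stage]  # raises KeyError for an unknown stage
    return TARANTA_URL_TEMPLATE.format(namespace=namespace)


print(taranta_url("deploy-lmc-to-itf-spf"))
```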

Pytest

Device FQDN arguments

The following arguments can be used with the pytest command to set the tango device FQDNs for the subservient devices.

<i>Note: These can be added to the PYTHON_VARS_AFTER_PYTEST variable in the makefile to set the FQDNs during pipeline runs.</i>

| Device | Flag |
|---|---|
| Dish Manager | --dish-manager-fqdn |
| Dish Structure | --dish-structure-fqdn |
| SPF | --spf-fqdn |
| SPFRx | --spfrx-fqdn |

Example

$ pytest -m "acceptance" tests/ --dish-structure-fqdn=some/test/fqdn
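For readers unfamiliar with how pytest exposes such flags, the sketch below shows one way they could be registered in a `conftest.py` using pytest's `pytest_addoption` hook. It is an illustration only: the repository's actual `conftest.py` may register and consume these options differently, and the `fqdn_overrides` helper is hypothetical.

```python
# Flags from the table above, mapped to the device they configure.
FQDN_OPTIONS = {
    "--dish-manager-fqdn": "Dish Manager",
    "--dish-structure-fqdn": "Dish Structure",
    "--spf-fqdn": "SPF",
    "--spfrx-fqdn": "SPFRx",
}


def pytest_addoption(parser):
    """Register one command-line option per device FQDN."""
    for flag, device in FQDN_OPTIONS.items():
        parser.addoption(
            flag,
            action="store",
            default=None,
            help=f"Tango FQDN of the {device} device",
        )


def fqdn_overrides(config):
    """Return only the FQDNs that were supplied on the command line."""
    overrides = {}
    for flag in FQDN_OPTIONS:
        value = config.getoption(flag)
        if value is not None:
            # Key by the flag name without its leading dashes.
            overrides[flag.lstrip("-")] = value
    return overrides
```

A fixture could call `fqdn_overrides(request.config)` and fall back to default FQDNs for any device that was not overridden.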

CI/CD deployment

Tango Device Servers documentation

These are all the Tango Device Servers currently included in the project:

package/DishManager

These devices make it possible to test the main dish monitoring and control functionality through the master device. Other devices forming part of the Dish Control system (e.g. the alarm system or archiver) will be added to the repository in the future.